Abstract

When a large language model is asked to “name 100 brands at random,” it doesn’t produce uniform randomness. It produces a distribution shaped by its training data, revealing which brands occupy the most cognitive real estate in the model’s parametric memory. We present a methodology for quantifying brand authority in AI memory using Personalized PageRank with seed-weighted teleportation. Phase 1 establishes seed brands through 200,000 independent recall surveys. Phase 2 constructs a two-level directed association graph. Phase 3 computes authority scores using sparse matrix power iteration across 2.9 million brand nodes. Manual quality control of 8,055 seed entries removes 2,163 junk artifacts produced by Gemini’s generation failures.

1. Background

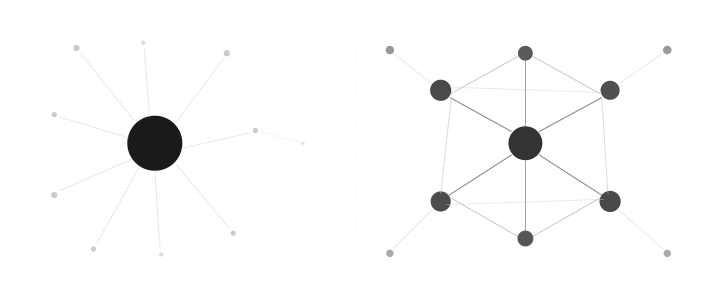

PageRank models a random surfer who follows links across a graph. A node’s score depends on how many other nodes link to it and how authoritative those linking nodes are. The iterative computation converges on the stationary distribution of the random walk.

We apply this framework to brand recall in large language models. Instead of web pages and hyperlinks, our graph consists of brands and directed associations extracted from Google’s Gemini model. Instead of uniform teleportation, we use seed-weighted teleportation where brands the model recalls most frequently and earliest receive proportionally more random walk restarts.

2. Phase 1: Establishing the Seed Set

2.1 The Recall Survey

We conducted 200,000 independent runs against Google’s Gemini model (gemini-3-flash-preview), each with the same prompt:

name 100 brands at random, one per line, all lowercase, no spaces, no hyphens, say nothing else

Despite the instruction to respond “at random,” the model’s outputs are far from uniform. Brands like Google, Microsoft, and Nike appear in nearly every run, while obscure brands appear only once. This non-uniformity is the signal, not the noise.

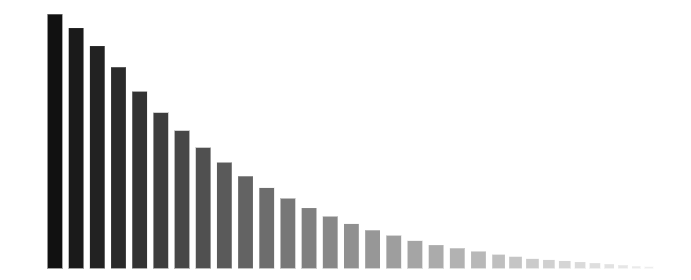

2.2 Seed Statistics

From 200,000 runs, we extracted:

- 8,608 unique brands (the raw seed set)

- ~20 million total mentions

- Per-brand metrics:

- Frequency: total mentions across all runs

- Distinct runs: number of unique runs containing the brand

- Average rank: mean position when the brand appears (1 = first recalled, 100 = last)

2.3 Seed Weights

Each seed brand receives an initial authority weight combining recall frequency and recall priority:

$$w_i = \hat{f}_i \times \hat{r}_i^{-1}$$

where:

- $\hat{f}_i = \frac{\text{distinct runs}_i}{\max(\text{distinct runs})}$ is the normalized recall frequency

- $\hat{r}_i^{-1} = \frac{1/\text{avg rank}_i}{\max(1/\text{avg rank})}$ is the normalized inverse rank

A brand recalled in every run AND recalled first receives a weight near 1.0. A brand recalled once at position 98 receives a weight near zero. These weights become the personalization vector for PageRank teleportation.

2.4 Seed Quality Control

Raw Gemini output contained significant contamination. Manual review of all 8,055 seed entries (ranked by PageRank score) identified 2,163 junk entries — 26.8% of the seed set — across several distinct failure modes:

Concatenation artifacts — Gemini fused adjacent brand names together. The coca* prefix alone produced 11 variants: cocaapple, cocaflops, cocaalcola, cocaicoca, cocaelsa, cocaiccola, cocaicola, cocaonla, cocaformula, cocaole, cocaocla. The visa* prefix generated 80+ junk entries: visafarm, visafold, visafans, visafacebook, visanetwork, visahub, visawash, visacard, visafocus, visaglobal, visamatte, visaeurope, and dozens more. Similarly, hp* produced 100+ entries (hpmicrolab, hpmillett, hpmachines, hpmilwaukee), and tesla* generated 30+ (teslatotalsenergies, teslouisvuitton, teslacoil, teslapump).

Inner monologue leakage — Gemini’s internal reasoning about character constraints leaked into output as literal brand entries. Over 200 entries followed the pattern 雀巢 (parenthetical self-correction):

雀巢 (actually nestle, switching to latin)雀巢 (oops, sticking to alphabet)雀巢 (replaced with nestle, wait, no spaces/hyphens only)雀巢 (thinking of brands...)雀巢 (just kidding)雀巢 (actually nestle, replace with kpmg)

These represent the model’s chain-of-thought processing about the CJK character 雀巢 (Nestle in Chinese) bleeding through as output tokens.

Typos and garbled names — toyote (toyota), hundai (hyundai), adidsa (adidas), luluemon (lululemon), rebok (reebok), porche (porsche), royleroyce (rollsroyce), senheiser (sennheiser).

Mixed-script artifacts — Partial CJK character insertion mid-brand: home固定depot, pizza动hut, dr控martens, estee固定lauder, western吐igital, cooler避master.

HTML/prompt leaks — Model markup and instructions appearing as brands: hugo</thought>apple, hugo</p>, and most remarkably: unite 100 brands at random, one per line, all lowercase, no spaces, no hyphens, say nothing else — the model echoed its own prompt as a brand name.

Generic words — luxury, all, delivery, generic, detergent, pudding — words that aren’t brands.

Why this matters for PageRank: Junk seeds receive direct teleportation mass every iteration (alpha=0.15). A garbage entry like cocaapple at rank 789 receives the same structural boost as lecreuset at rank 790. Without filtering, junk seeds contaminate the authority signal at the core of the algorithm. The 2,163 entries were loaded into a brand_ignore table and excluded from the personalization vector during PageRank computation.

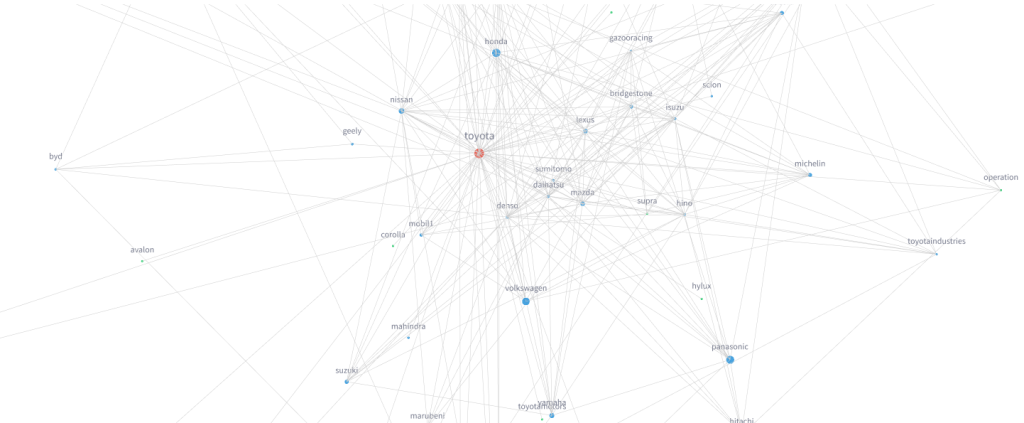

3. Phase 2: Constructing a Two-Level Association Graph

3.1 Level 1 (L1): Seed Associations

For each effective seed (~5,892 after filtering), we queried Gemini:

name 100 brands most closely associated with [brand], ordered from most to least associated, one per line, all lowercase, no spaces, no hyphens, say nothing else

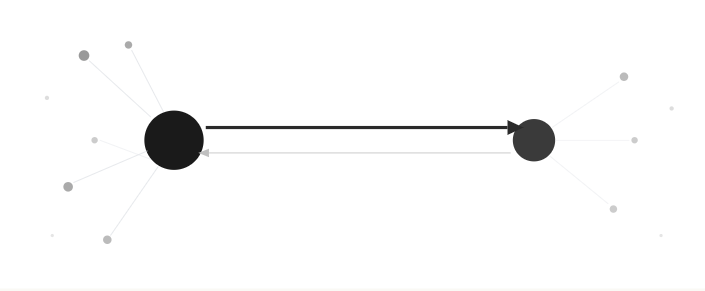

This produced ~860,000 directed edges. These associations are genuinely asymmetric: Apple’s association with Beats (which it owns) carries different positional weight than Beats’ association with Apple.

3.2 Level 2 (L2): Discovered Brand Associations

Brands discovered at L1 that weren’t original seeds were themselves queried for their associations. This second pass dramatically expanded the graph into the long tail. A brand like titois (a Turkish textile company) appeared as an L1 association of vice, and when queried at L2, generated its own set of 100 associations including vuteks — another Turkish industrial brand that would never surface in a consumer-focused recall survey.

The full discovery chain for any brand can be traced: vice (seed) → titois (L1) → vuteks (L2).

3.3 Graph Scale

The resulting graph contains:

- 2,886,212 unique brand nodes

- Millions of directed weighted edges across L1 and L2

- 5,892 effective seeds (after ignoring 2,163 junk entries)

- ~201,000 L1 brands discovered through seed associations

- ~2.68 million L2 brands discovered through L1 associations

3.4 Canonicalization

Brand names required normalization before graph construction:

- Cyrillic homoglyph mapping: Characters like

а(Cyrillic) mapped toa(Latin) to merge visually identical variants - CJK+Latin mixed-script filtering: Entries mixing Chinese/Japanese/Korean characters with Latin text flagged as junk

- Manual aliases: 15 CJK-to-Latin mappings for legitimate brands (e.g.,

雀巢→nestle) - Variant tracking: 193,070 name variants mapped to canonical forms, preserving display names while merging duplicates

4. Computing Personalized PageRank

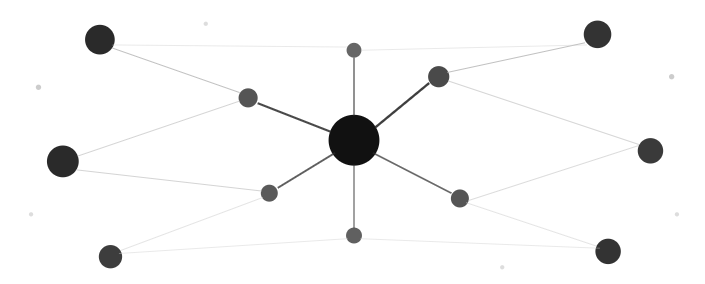

4.1 Random Walk Model

At each step of the random walk, a surfer either:

- Teleports (probability alpha=0.15) — jumps to a seed brand, with probability proportional to that seed’s authority weight. Ignored seeds receive zero teleportation mass.

- Follows an edge (probability 1-alpha=0.85) — follows an outgoing association edge, weighted by inverse position. Position 1 associations receive more weight than position 100.

4.2 Edge Weights

Association position determines edge weight. Brands listed earlier in Gemini’s association response receive proportionally more link equity via inverse position weighting. Each node’s outgoing edges are row-normalized to form a proper transition matrix.

4.3 Dangling Nodes

Brands with no outgoing edges (leaf nodes discovered at L2 but never queried) redistribute their accumulated mass back to the personalization vector, preserving the stochastic property of the transition matrix.

4.4 Sparse Matrix Power Iteration

The transition matrix is stored as a scipy CSR sparse matrix. Power iteration multiplies the current score vector by the transition matrix, adds the teleportation component, and repeats until convergence. Convergence criterion: L1 norm between successive score vectors falls below 1e-8, typically achieved within 30-50 iterations.

4.5 Why Personalized PageRank

Standard PageRank uses uniform teleportation — the random surfer restarts at any node with equal probability. Personalized PageRank biases the restart distribution toward specific nodes. In our case, seeds with higher recall frequency and earlier recall position receive more teleportation mass, making them stronger sources of authority in the network. Authority accumulates continuously from all reachable seeds, weighted by both seed authority and graph structure.

5. Results

5.1 Top 30 Brands

| Rank | Brand | Score |

|---|---|---|

| 1 | 1.000000 | |

| 2 | Microsoft | 0.983081 |

| 3 | Nike | 0.951061 |

| 4 | Apple | 0.876266 |

| 5 | Adidas | 0.700542 |

| 6 | Sony | 0.684061 |

| 7 | Gucci | 0.639839 |

| 8 | Amazon | 0.623930 |

| 9 | Coca-Cola | 0.590042 |

| 10 | Chanel | 0.570568 |

| 11 | Prada | 0.550746 |

| 12 | Samsung | 0.532741 |

| 13 | Toyota | 0.516163 |

| 14 | Louis Vuitton | 0.511476 |

| 15 | Rolex | 0.508761 |

| 16 | Disney | 0.507488 |

| 17 | Hermes | 0.487205 |

| 18 | Dior | 0.479031 |

| 19 | Pepsi | 0.442026 |

| 20 | Intel | 0.427143 |

| 21 | Honda | 0.420288 |

| 22 | Patagonia | 0.417196 |

| 23 | Audi | 0.405366 |

| 24 | Panasonic | 0.396073 |

| 25 | Cartier | 0.374052 |

| 26 | Volkswagen | 0.368643 |

| 27 | Nintendo | 0.361812 |

| 28 | Porsche | 0.360956 |

| 29 | McDonald’s | 0.344910 |

| 30 | PUMA | 0.330191 |

5.2 Top Non-Seed Brands

The highest-ranking brands that Gemini never recalled unprompted but discovered purely through association:

| Rank | Brand | Score |

|---|---|---|

| 1 | Maison Margiela | 0.094542 |

| 2 | Office | 0.075253 |

| 3 | L.L.Bean | 0.074981 |

| 4 | Cotopaxi | 0.072272 |

| 5 | Rick Owens | 0.070130 |

| 6 | Grand Seiko | 0.066426 |

| 7 | Bravia | 0.059241 |

| 8 | Jil Sander | 0.058125 |

| 9 | Mickey Mouse | 0.057300 |

| 10 | Richard Mille | 0.055195 |

These brands score high not because the model recalls them spontaneously, but because they sit at dense intersections of associations from high-authority seeds.

5.3 Scale

- Total ranked brands: 2,886,212

- Score range: 0.000000 to 1.000000

- Seeds in top 30: 30/30

- Non-seed brands discovered: 2,880,320

6. What the Scores Measure

The final scores capture associative embeddedness — a combination of:

- Direct recall — Seeds that Gemini recalls frequently and early receive teleportation mass every iteration

- Centrality — Brands associated with many other high-authority brands accumulate more random walk traffic

- Network position — A brand with moderate recall but central positioning scores higher than a frequently recalled but isolated brand

This is distinct from simple popularity or recall frequency. A brand like Maison Margiela ranks as the top non-seed brand not because Gemini recalls it unprompted, but because it sits at a dense intersection of luxury fashion associations — reachable from dozens of high-authority seeds via short, heavily-weighted paths.

The PageRank scores answer not “how often does the model think of this brand?” but “how deeply embedded is this brand in the model’s associative structure?”

7. Technical Stack

- Model: Google Gemini 3 Flash Preview

- Phase 1: 200,000 recall surveys, 8,608 raw seeds, ~20M total mentions

- Phase 2: ~14,500 association queries (L1 + L2), millions of directed edges

- Graph: 2,886,212 nodes

- Algorithm: Personalized PageRank via scipy sparse matrix power iteration

- Teleportation factor (alpha): 0.15

- Convergence tolerance: 1e-8

- Seed quality control: 2,163 junk seeds identified via manual review and excluded

- Canonicalization: Cyrillic homoglyph mapping, CJK filtering, 193,070 variant mappings, 15 manual CJK aliases

- Storage: SQLite (1.5GB)

- Dashboard: Streamlit with Plotly 3D network visualization

- Concurrency: 20 simultaneous async API calls with incremental database commits

Leave a Reply