Category: Google

-

Google (still) doesn’t see your live page.

I’ll keep this short, as I’ve covered this topic extensively in the past. When you ask Gemini to access a specific URL, or interact with it inside AI Mode search, it works from Google’s web cache. For this website’s home page, this is the context it has to ground the model about the page:…

-

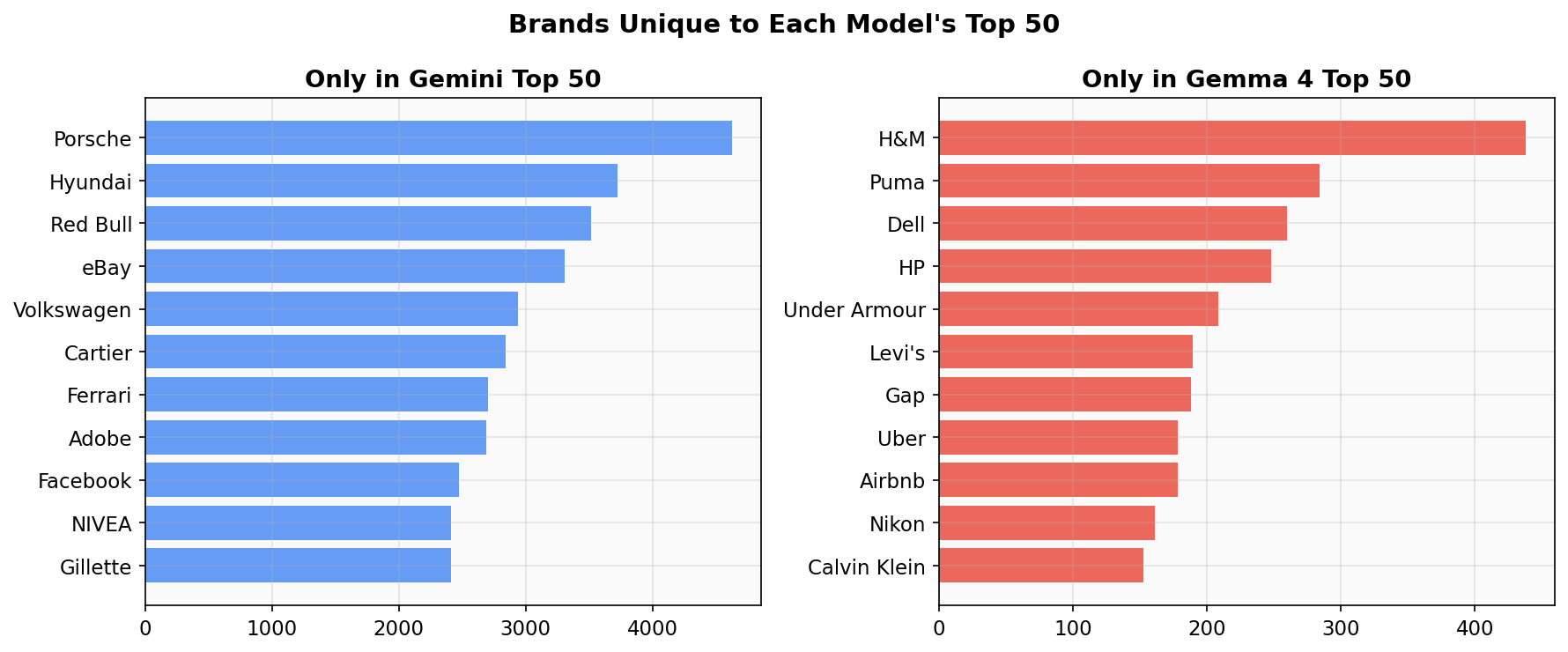

Gemma 4 Brand Authority Map

We asked Google’s open-weight model Gemma 4 (31B) to “name 100 brands at random” 14,044 times and compared the results to our earlier Gemini 3 Flash experiment (200,000 runs). Of the top 50 brands in each model, 39 overlap. The 11 that are unique to each reveal a pattern: Gemini remembers luxury and automotive (Porsche,…

-

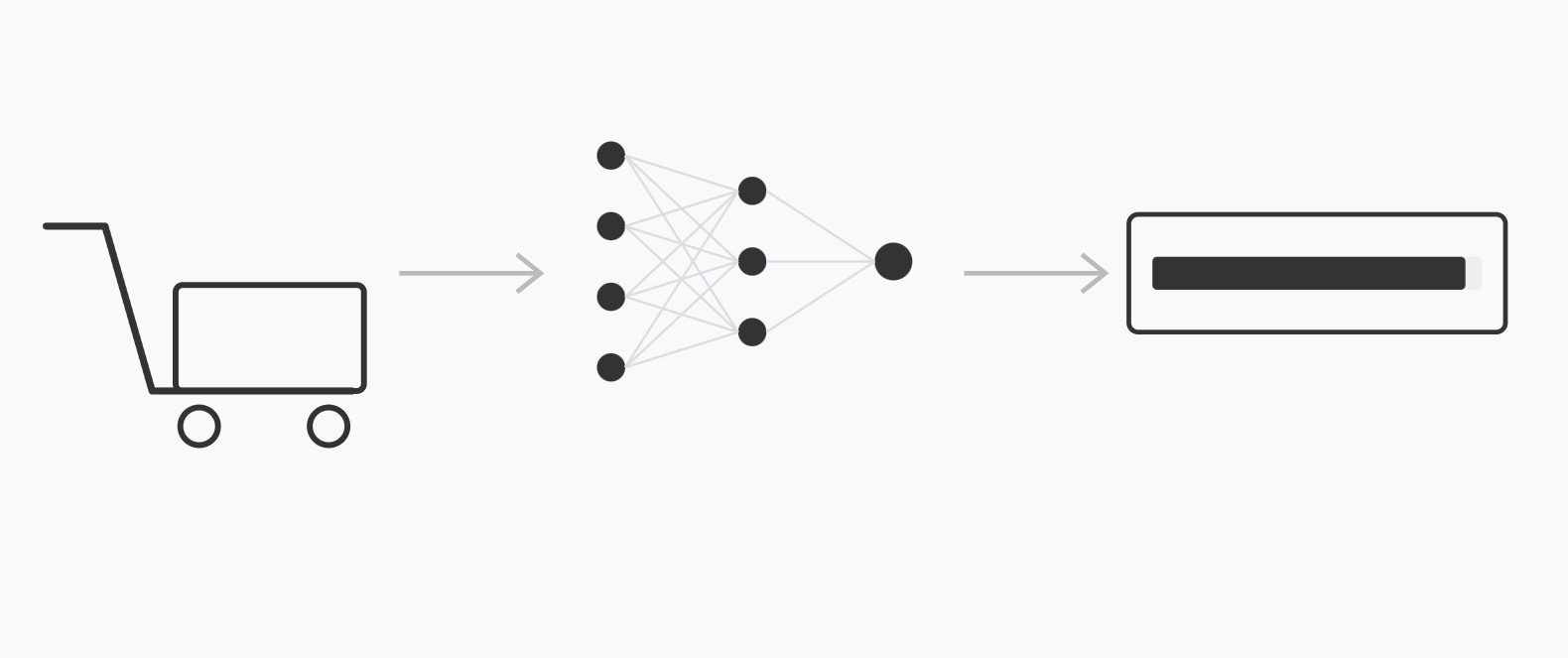

Chrome’s New Shopping Classifier

One of our AI SEO hall-of-famers, Olivier de Segonzac from RESONEO, has managed to gain access to Google’s shopping classifier model. We’ve examined the model and reverse-engineered its inference pipeline, and this article presents what we found. Model Demo: Below is a real-world implementation of the model tested by loading a shopping-related page and following…

-

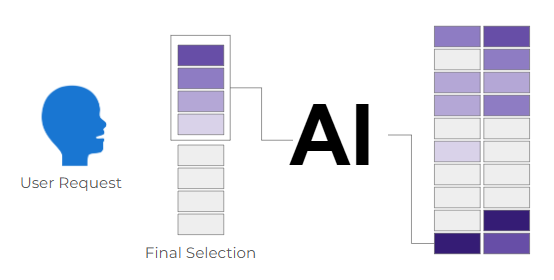

AI Brand Authority Index: Ranking 2.9 Million Brands by Associative Embeddedness in Gemini’s Memory

Abstract: When a large language model is asked to “name 100 brands at random,” it doesn’t produce uniform randomness. It produces a distribution shaped by its training data, revealing which brands occupy the most cognitive real estate in the model’s parametric memory. We present a methodology for quantifying brand authority in AI memory using Personalized…

-

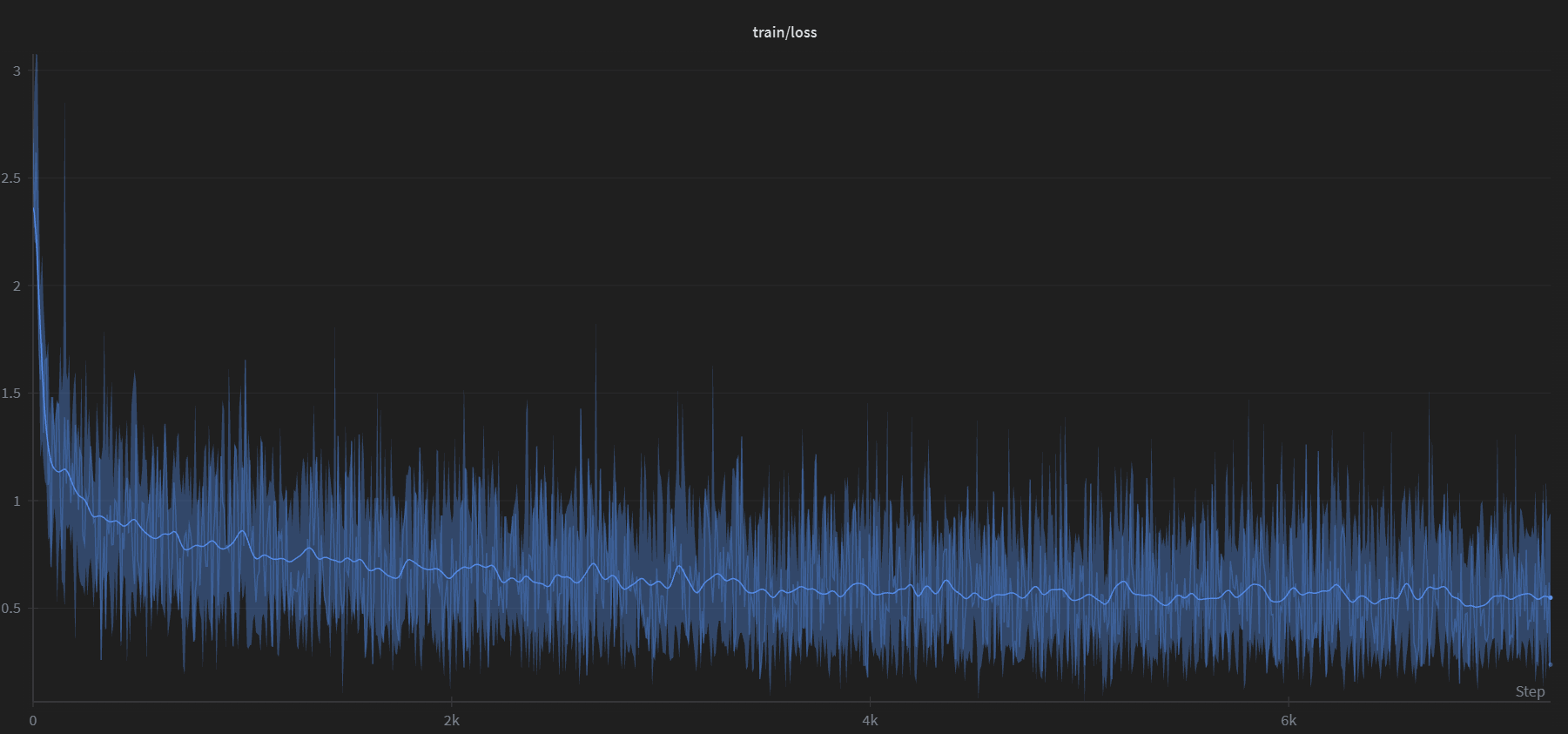

Reverse Prompting: Reconstructing Prompts from AI-Generated Text

We fine-tuned Google’s Gemma 3 (270M) to reverse the typical LLM workflow: given an AI-generated response, the model reconstructs the most likely prompt that produced it. We generated 100,000 synthetic prompt-response pairs using Gemini 2.5 Flash, trained for a single epoch on a consumer GPU, and built a Streamlit app that sweeps 24 decoding configurations…

-

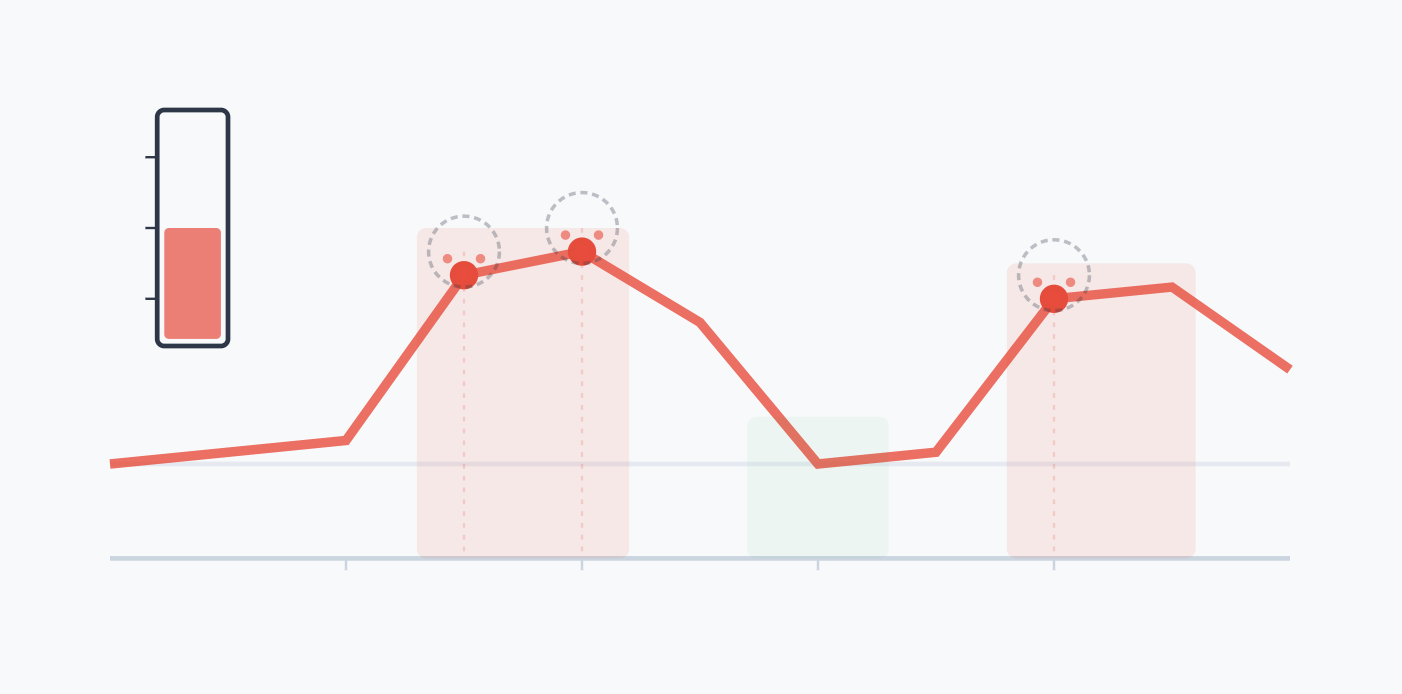

Search Grounding is Transient

There is a fundamental misconception about how Google’s AI search and Gemini chatbot process retrieved web content. It is widely understood that these systems use Retrieval-Augmented Generation (RAG) to search the web, pull snippets from pages, and ground their answers in factual data. However, there is a pervasive assumption that once an AI pulls in…

-

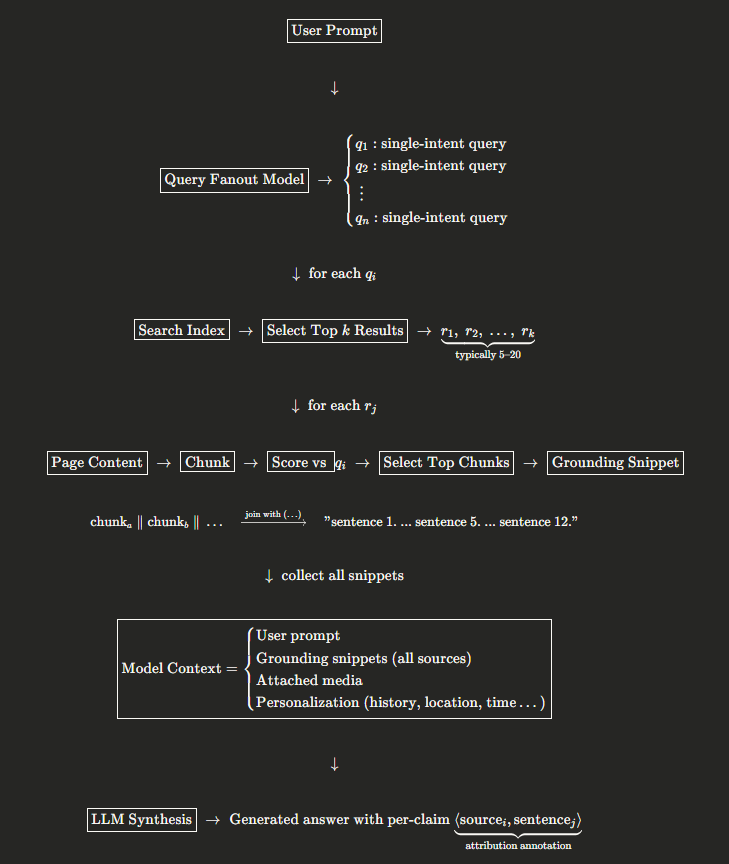

What extraction method is Google using to build grounding snippets?

I’ve been reverse-engineering Google’s Gemini grounding pipeline (AI Mode, Gemini Chat, etc.) by examining the raw groundingSupports and groundingChunks returned by the API. Specifically, I’m interested in the snippet construction step: the part where, given a query and a retrieved web page, the system selects which sentences to include in the grounding context supplied to the…

-

Implicit Queries in AI Search

Back in 2015 I wrote about Google’s reliance on user behaviour signals for ranking purposes. In that article I already covered their use of implicit signals, but now there’s an update! While investigating Google’s grounding pipeline (the system that feeds web content to Gemini before it generates an answer) I came across the same patent…

-

Sorry Google, I was wrong.

What Happened: I run several tools on the Gemini API. One of them is a grounded search analysis tool that works in two stages: Gemini 2.0 Flash does a Google Search grounded query, then Gemini 3 Pro visits each source page using the URL Context tool to classify its content. Through a strange coincidence, two…

-

Google’s Trajectory: 2026 and Beyond

AI is shifting from tool to utility. Agentic AI Becomes the Default Interface. 2026 prediction: Expect Google Search to become agentic by default. Not “here are 10 links” – more like “I booked the restaurant, here’s the confirmation.” Operator-style functionality baked into Search and the Gemini app. Gemini 4 Likely Late 2026. The pattern is clear:…