Category: Machine Learning

-

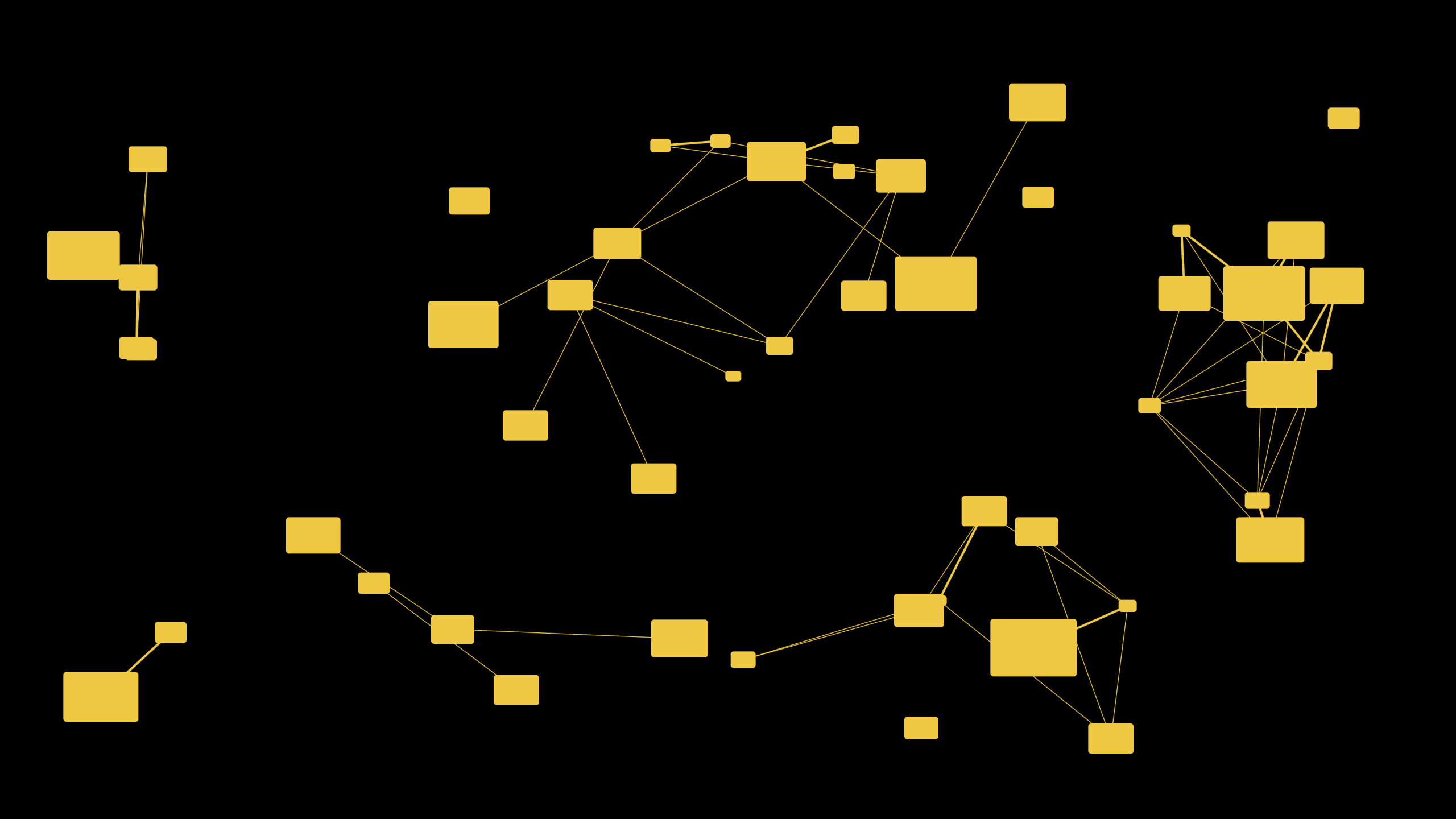

Analysis of Gemini Embed Task-Based Dimensionality Deltas

When generating vector embeddings for your text using Gemini Embed, there are several embedding optimisation modes. Each one produces slightly different embeddings, optimised for the task at hand. The embeddings for semantic similarity are the most distinct from all other types, while retrieval query, retrieval document and fact verification embeddings are most…

-

Dynamic per-label thresholds for large-scale search query classification with Otsu’s method

Solving the “Which Score Is Good Enough?” Puzzle The real-world problem Arbitrary-label search-query intent classifiers spit out a confidence score per label. On clean demos you set one global cut-off, say 0.50, and move on. In production: Manual tuning per label quickly turns into a never-ending whack-a-mole, especially when the taxonomy is customized client-by-client (e.g., SaaS…
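As a rough illustration of the idea in the title, here is a minimal sketch of Otsu's method applied to one label's confidence scores at a time. It assumes scores lie in [0, 1]; the function name `otsu_threshold`, the bin count, the label names and the sample scores are all made up for illustration and are not taken from the post.

```python
def otsu_threshold(scores, bins=64):
    """Return the cut-off in [0, 1] that maximises between-class variance."""
    # Histogram the scores; bin i covers [i / bins, (i + 1) / bins).
    hist = [0] * bins
    for s in scores:
        hist[min(int(s * bins), bins - 1)] += 1

    total = len(scores)
    sum_all = sum(i * h for i, h in enumerate(hist))

    best_var, best_bin = -1.0, 0
    w0 = 0      # number of scores in the "low" class so far
    sum0 = 0    # sum of bin indices in the "low" class so far
    for i in range(bins - 1):
        w0 += hist[i]
        sum0 += i * hist[i]
        w1 = total - w0
        if w0 == 0 or w1 == 0:
            continue
        mu0 = sum0 / w0
        mu1 = (sum_all - sum0) / w1
        # Proportional to the between-class variance (constant factor dropped).
        var_between = w0 * w1 * (mu0 - mu1) ** 2
        if var_between > best_var:
            best_var, best_bin = var_between, i

    # The threshold is the upper edge of the last "low" bin.
    return (best_bin + 1) / bins


# Hypothetical per-label score samples (illustrative values only).
scores_by_label = {
    "transactional": [0.05, 0.08, 0.10, 0.12, 0.15, 0.80, 0.85, 0.90],
    "navigational": [0.02, 0.03, 0.04, 0.05, 0.30, 0.35, 0.40],
}
thresholds = {label: otsu_threshold(s) for label, s in scores_by_label.items()}
```

Each label ends up with its own cut-off derived from the shape of its score distribution, so a label whose positives only reach 0.3 to 0.4 is not held to the same bar as one whose positives sit near 0.9.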

-

Top 10 Most Recent Papers by MUVERA Authors

MUVERA Authors: 1. Laxman Dhulipala (Google Research & UMD) Top 10 Recent Papers (2023-2025) Research Focus Areas 2. Majid Hadian (Google DeepMind) Top 10 Recent Papers (2023-2025) Research Focus Areas 3. Jason Lee (Google Research & UC Berkeley) Top 10 Recent Papers (2023-2025) Research Focus Areas 4. Rajesh Jayaram (Google Research) Top 10 Recent Papers…

-

Training Gemma‑3‑1B Embedding Model with LoRA

In our previous post, Training a Query Fan-Out Model, we demonstrated how to generate millions of high-quality query reformulations without human labelling, by navigating the embedding space between a seed query and its target document and then decoding each intermediate vector back into text using a trained query decoder. That decoder’s success critically depends on…

-

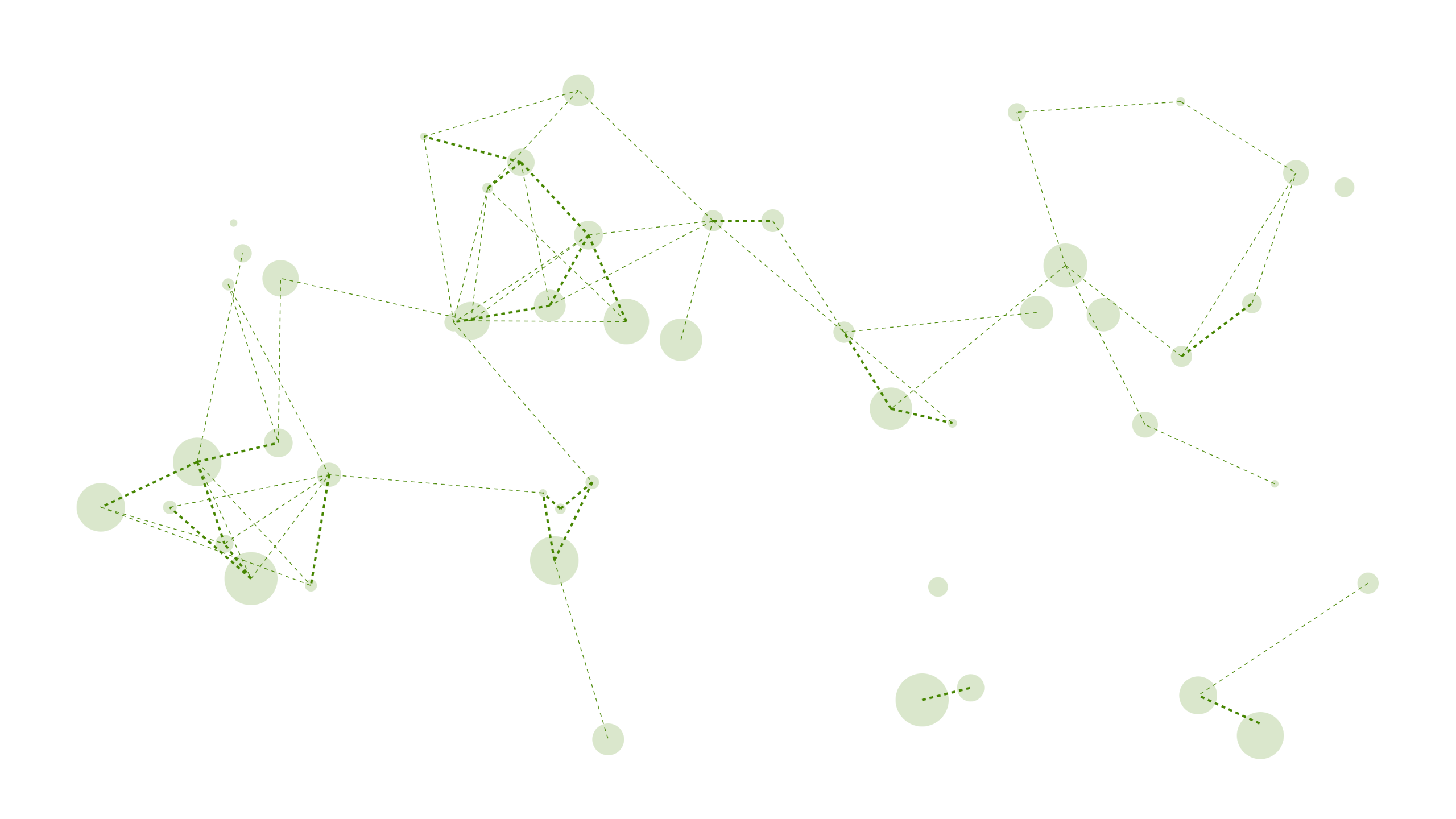

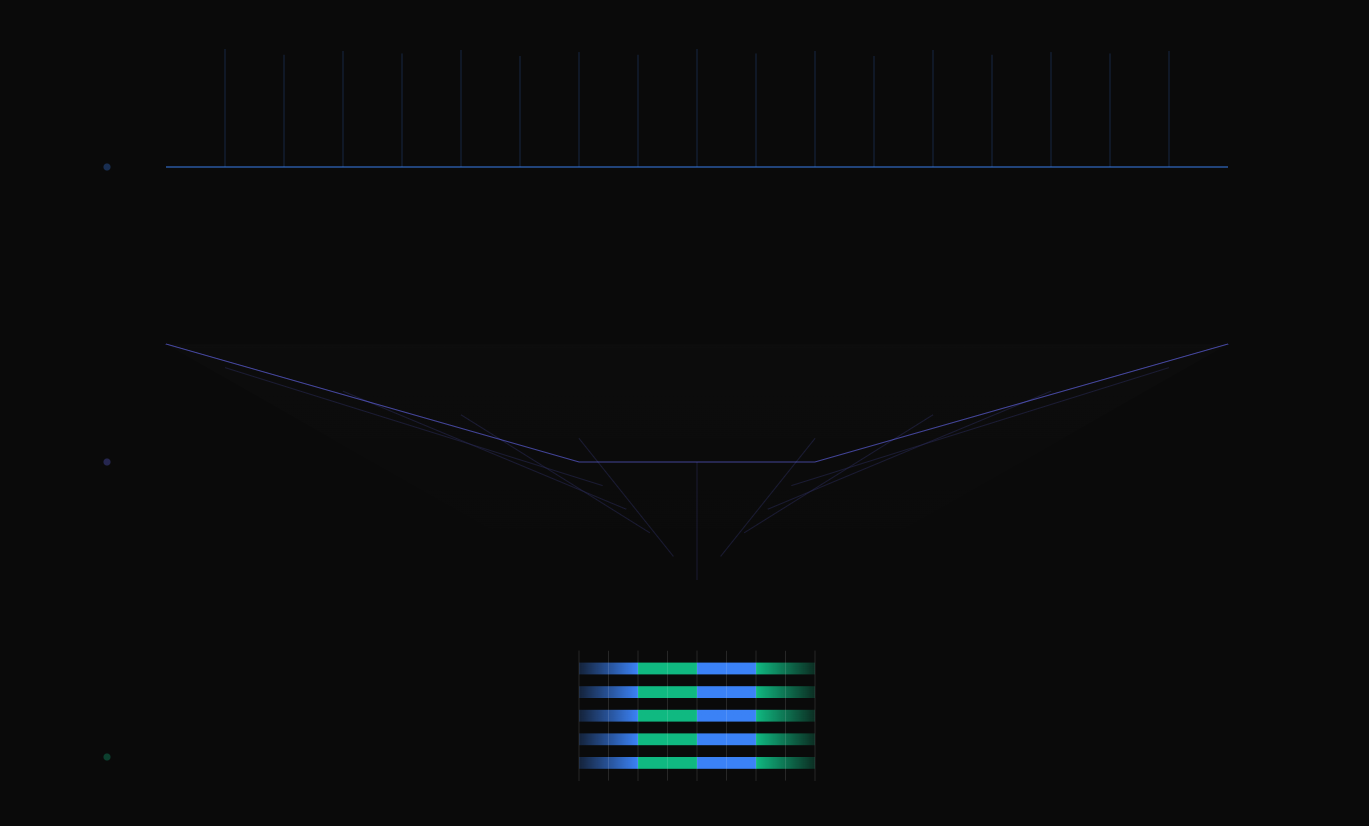

Training a Query Fan-Out Model

Google discovered how to generate millions of high-quality query reformulations without human input by literally traversing the mathematical space between queries and their target documents. Here’s How it Works This generated 863,307 training examples for a query suggestion model (qsT5) that outperforms all existing baselines. Query Decoder + Latent Space Traversal Step 1: Build a…

-

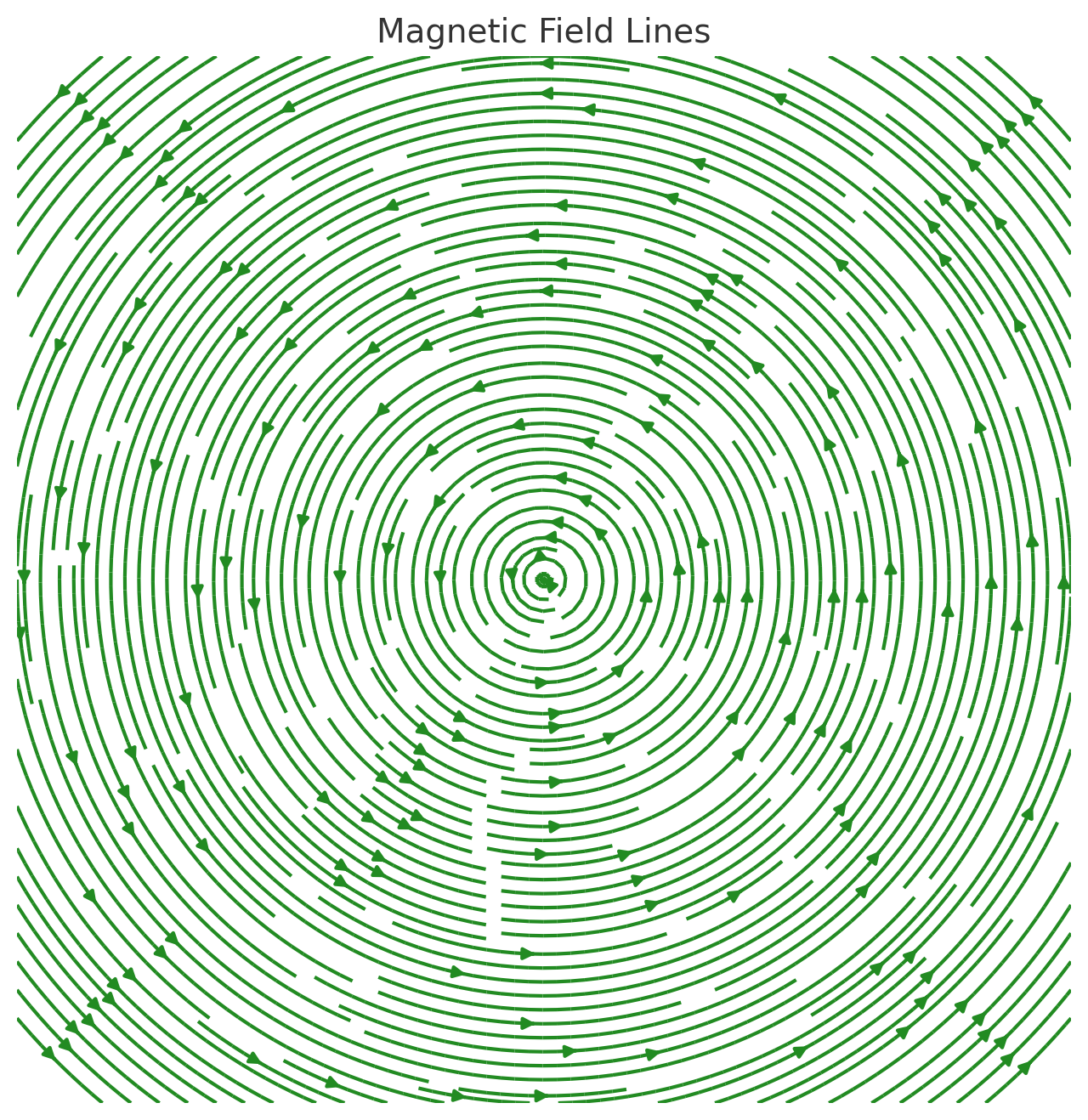

Cosine Similarity or Dot Product?

Google’s embedder uses dot product between normalized vectors, which is computationally more efficient than, but mathematically equivalent to, cosine similarity. How Googlers work and think internally typically aligns with their open source code (Gemini -> Gemma), and Chrome is no exception. It’s why I look there for answers and clarity on Google’s machine learning approaches. After…
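The equivalence claimed above can be checked with a few lines of plain Python. This is a minimal sketch with made-up vectors, not code from Chrome or any Google model; the helper names are illustrative.

```python
import math


def dot(a, b):
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    """Scale a vector to unit L2 length."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def cosine(a, b):
    """Cosine similarity: dot product divided by both vector lengths."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


# Two arbitrary example vectors (not real embeddings).
a = [3.0, 4.0, 0.0]
b = [1.0, 2.0, 2.0]

cos_ab = cosine(a, b)
# Normalizing once up front makes every later comparison a bare dot product.
dot_normalized = dot(normalize(a), normalize(b))
```

The efficiency gain comes from paying the normalization cost once per vector at indexing time, rather than recomputing both norms for every pairwise comparison.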

-

Universal Query Classifier

Generalist, Open‑Set Classification for Any Label Taxonomy We’ve developed a search query classifier that takes any list of labels you hand it at inference time and tells you which ones match each search query. No retraining, ever. Just swap in new labels as they appear. Old workflow Pain New workflow Build + label data + retrain…

-

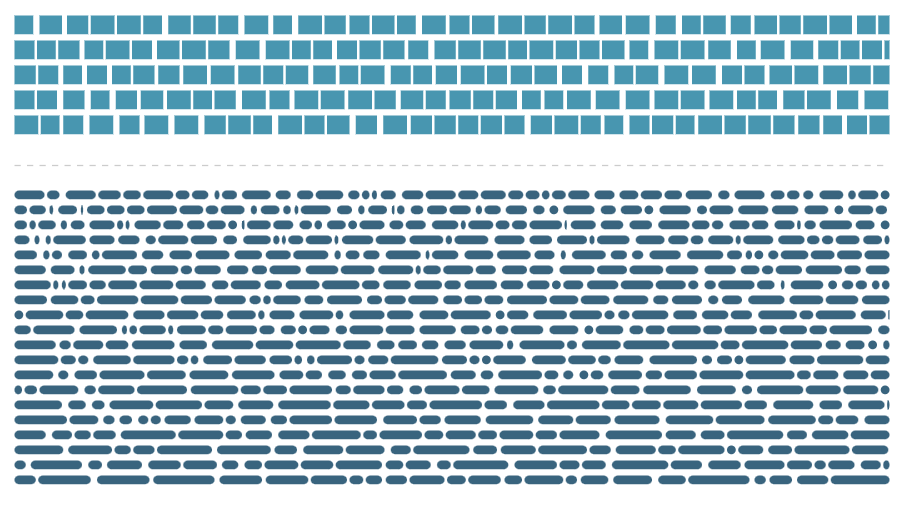

Vector Embedding Optimization

Embedding Methods Evaluation: Results, Key Findings, and a Surprising Insight On June 6, 2025, we ran a comprehensive evaluation comparing four different embedding methods (regular, binary, mrl, and mrl_binary) on a dataset of paired sentences. The goal was to measure each method’s speed, storage footprint, similarity quality, and accuracy against a ground truth of sentence pairs. Below, we…
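For readers unfamiliar with the four method names, the sketch below shows one common way such variants are derived from a full-precision vector. It is an assumption about what the labels mean, not the evaluation's actual code: binary is taken to be sign-bit quantization compared by Hamming similarity, and mrl is taken to be Matryoshka-style truncation to the leading dimensions followed by re-normalization, which only preserves quality for models trained that way. The vectors are invented.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit L2 length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mrl_truncate(v, dims):
    """Keep the first `dims` dimensions and re-normalize (assumed 'mrl')."""
    return l2_normalize(v[:dims])


def binarize(v):
    """One bit per dimension: 1 if positive, else 0 (assumed 'binary')."""
    return [1 if x > 0 else 0 for x in v]


def hamming_similarity(a, b):
    """Fraction of bit positions on which two binary vectors agree."""
    return sum(1 for x, y in zip(a, b) if x == y) / len(a)


# Invented 8-dimensional "embeddings" for two sentences.
emb_a = [0.4, -0.2, 0.7, 0.1, -0.5, 0.3, -0.1, 0.2]
emb_b = [0.5, -0.1, 0.6, -0.2, -0.4, 0.2, 0.1, 0.3]

mrl_a = mrl_truncate(emb_a, 4)                    # 'mrl': half the dimensions
bin_a, bin_b = binarize(emb_a), binarize(emb_b)   # 'binary': 1 bit per dimension
mrl_bin_a = binarize(emb_a[:4])                   # 'mrl_binary': both combined
binary_sim = hamming_similarity(bin_a, bin_b)
```

Under these assumptions the storage trade-off is easy to see: a float32 vector costs 32 bits per dimension, the binary form costs 1 bit per dimension, and truncation shrinks either form in proportion to the dimensions kept.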

-

Live Blog: Hacking Gemini Embeddings

Prompted by Darwin Santos on the 22nd of May and a few days later by Dan Hickley, I had no choice but to jump on this experiment; it’s just too fun to skip. Especially now that I’m aware of the Gemini embedding model. The objective is to reproduce the claims of this research paper…

-

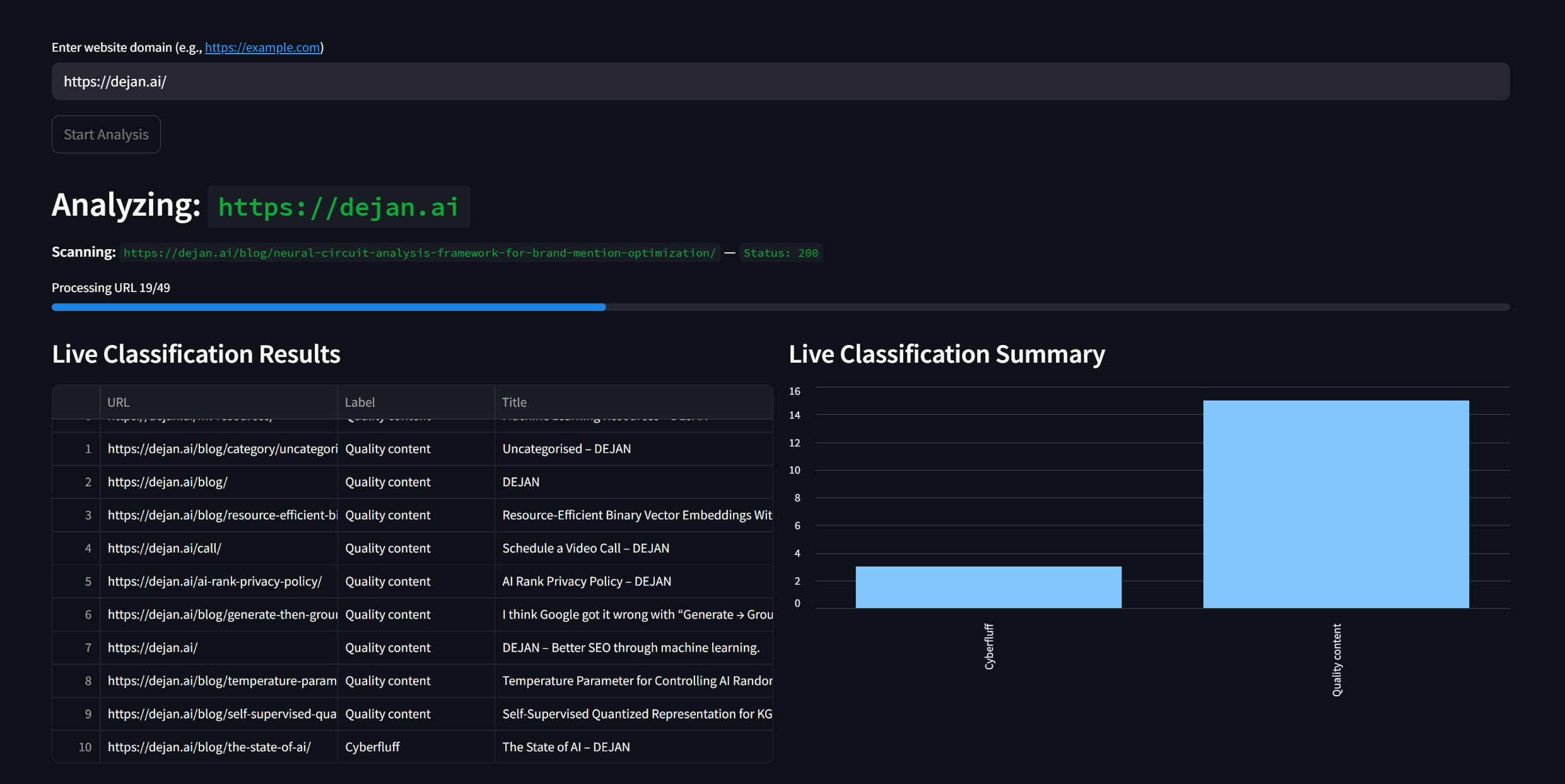

Content Substance Classification

Demo: https://dejan.ai/tools/substance/ Preface In 1951, Isaac Asimov proposed an NLP method called Symbolic Logic Analysis (SLA) where text is reduced to its essential logical components. This method involves breaking down sentences into symbolic forms, allowing for a precise examination of salience and semantics analogous to contemporary transformer-based NER (named entity recognition) and summarisation techniques. In…