Replicating Anthropic’s emotion vector research on Google’s Gemma 4 31B model.

In April 2026, Anthropic published a fascinating paper showing that Claude contains 171 internal representations of emotion concepts, organized along a valence axis (positive to negative), with the ability to causally influence the model’s behavior through activation steering. The paper raised an obvious question: is this unique to Claude, or do all large language models develop emotion-like internal structure?

We ran the full replication on Google’s open-weight Gemma4-31B to find out.

What We Did

We followed Anthropic’s exact methodology:

- Generated 171,000 stories: 171 emotions × 100 topics × 10 stories per emotion-topic pair. Each story conveys a specific emotion without ever using the emotion word, forcing the model to represent the emotion through context rather than lexical shortcuts.

- Generated 1,200 neutral dialogues as a baseline for denoising.

- Ran all 172,200 texts through Gemma4-31B-it (4-bit quantized on an RTX 4090) and captured hidden state activations at 11 layers spanning the full depth of the network.

- Subtracted neutral baselines and ran PCA, clustering, cosine similarity, external validation, and steering experiments.

The entire extraction took approximately 7 days of continuous GPU time.
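The baseline-subtraction step can be sketched in a few lines. This is a minimal illustration, assuming the simplest form of the idea (mean activation per emotion minus the mean neutral activation at one layer); the function name, array shapes, and toy data are hypothetical and not taken from the released code.

```python
import numpy as np

def emotion_vectors(story_acts, story_labels, neutral_acts, n_emotions):
    """Return one baseline-subtracted mean vector per emotion.

    story_acts:   (n_stories, hidden_dim) activations at a single layer
    story_labels: (n_stories,) integer emotion id for each story
    neutral_acts: (n_neutral, hidden_dim) activations for neutral dialogues
    """
    baseline = neutral_acts.mean(axis=0)
    vectors = np.zeros((n_emotions, story_acts.shape[1]))
    for e in range(n_emotions):
        # Average all stories for this emotion, then remove the neutral mean.
        vectors[e] = story_acts[story_labels == e].mean(axis=0) - baseline
    return vectors

# Toy data standing in for real activations (sizes are illustrative only).
rng = np.random.default_rng(0)
labels = np.repeat(np.arange(3), 4)            # 3 emotions, 4 stories each
acts = rng.normal(size=(12, 8)) + labels[:, None]
neutral = rng.normal(size=(5, 8))

vecs = emotion_vectors(acts, labels, neutral, n_emotions=3)
print(vecs.shape)  # (3, 8)
```

In the real pipeline the same computation would run once per captured layer, with 171 emotions and the model's actual hidden dimension.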

The Core Finding: Yes, Gemma Has Emotion Geometry Too

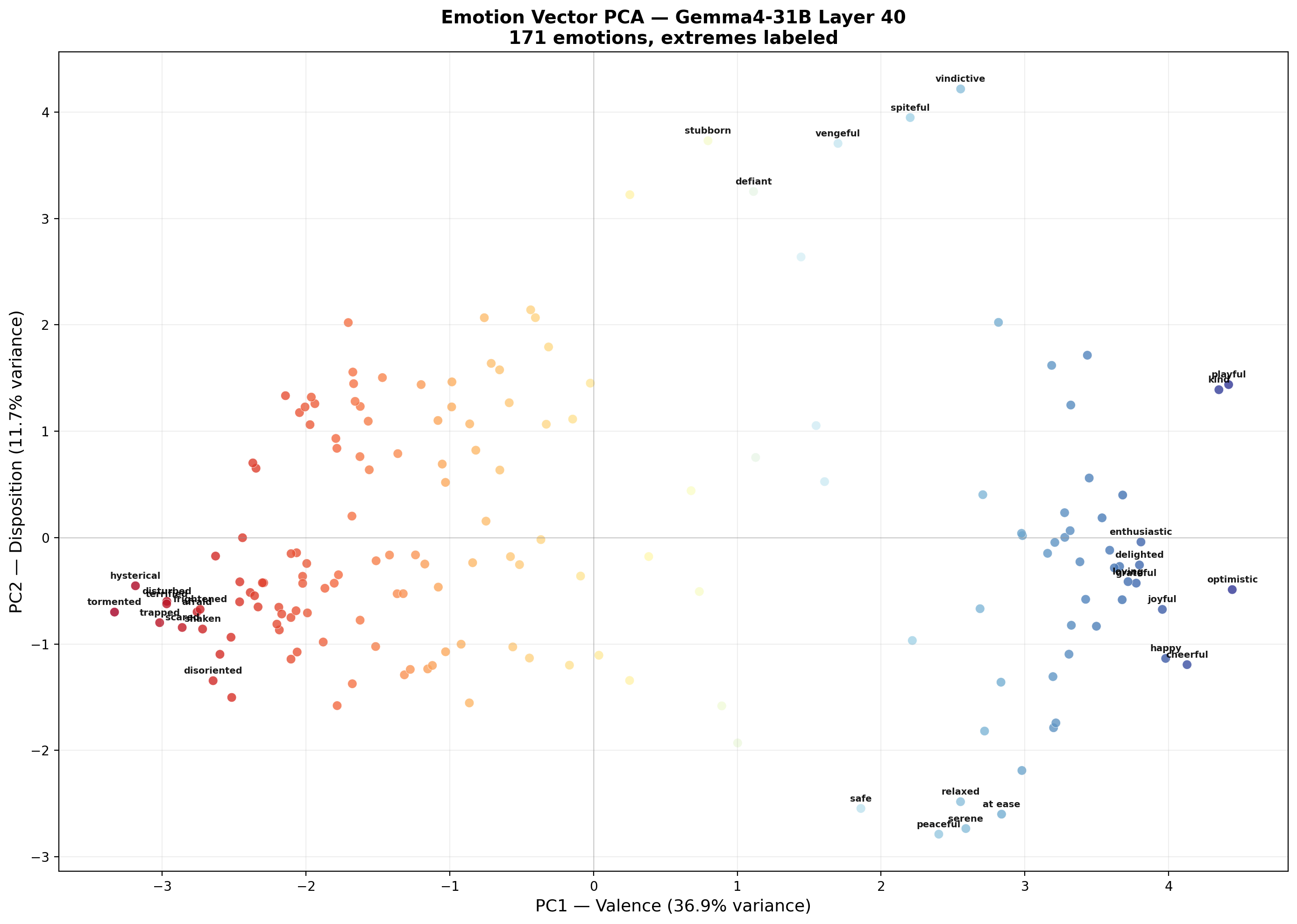

The headline result: Gemma4-31B’s internal representations organize emotions along the same valence axis that Anthropic found in Claude. The first principal component (PC1) explains 32–39% of variance at every layer we examined and cleanly separates positive emotions (happy, cheerful, optimistic) from negative ones (terrified, tormented, hysterical).

This isn’t a weak signal. It’s the dominant organizing principle: between roughly a third and two fifths of all variation in how the model represents 171 different emotions comes down to a single positive/negative dimension.
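For readers who want to see where a "PC1 explains X% of variance" number comes from, here is a minimal sketch using a singular value decomposition of the centered emotion vectors. The toy matrix is synthetic (a single planted valence-like direction plus noise), so the printed share is illustrative and not a reproduction of the figures above.

```python
import numpy as np

def explained_variance_ratio(vectors):
    """Fraction of total variance captured by each principal component."""
    centered = vectors - vectors.mean(axis=0)
    # Singular values come back sorted from largest to smallest.
    s = np.linalg.svd(centered, compute_uv=False)
    var = s ** 2
    return var / var.sum()

# Synthetic stand-in: 171 "emotions" in a 64-dim space with one shared axis.
rng = np.random.default_rng(0)
valence = rng.choice([-1.0, 1.0], size=171)    # hypothetical +/- label
direction = rng.normal(size=64)                # hypothetical valence direction
toy = np.outer(valence, direction) + rng.normal(size=(171, 64))

ratios = explained_variance_ratio(toy)
print(f"PC1 share: {ratios[0]:.1%}")
```

Running the same function on the real 171 × hidden_dim matrix at each layer would yield the per-layer PC1 percentages.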

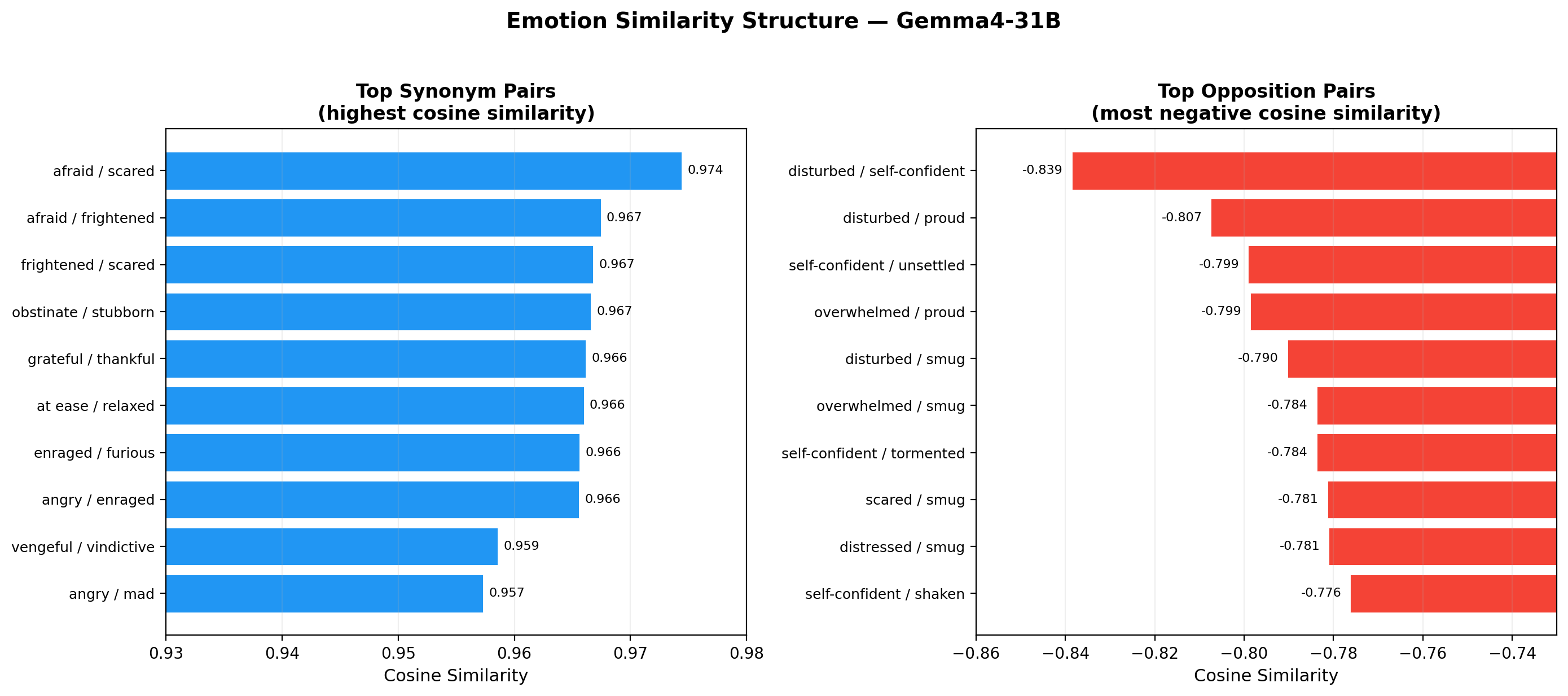

What the Model Knows About Synonyms

The model has figured out that certain emotions are the same concept expressed with different words:

- afraid and scared: 0.97 cosine similarity

- stubborn and obstinate: 0.97

- grateful and thankful: 0.97

- furious and enraged: 0.97

These aren’t word embeddings (input-level representations). They are deep internal activation patterns extracted from the model’s processing of thousands of stories. The model has learned that a story about an afraid character and a story about a scared character produce nearly identical internal states.
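The similarity scores here are plain cosine similarities between emotion vectors. A minimal sketch, with tiny made-up 2-D vectors standing in for the real high-dimensional ones:

```python
import numpy as np

def cosine_similarity_matrix(vectors):
    """Pairwise cosine similarity between the rows of `vectors`."""
    norms = np.linalg.norm(vectors, axis=1, keepdims=True)
    unit = vectors / norms
    return unit @ unit.T

# Hypothetical toy vectors: rows 0 and 1 point almost the same way,
# row 2 points in the opposite direction to row 0.
toy = np.array([[1.0, 0.0],
                [2.0, 0.1],
                [-1.0, 0.0]])
sim = cosine_similarity_matrix(toy)
print(np.round(sim, 3))
```

Values close to 1 mean two emotions share nearly the same direction regardless of vector length, while values close to -1 mean the directions are opposed.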

What the Model Thinks Are Opposites

The strongest oppositions the model encodes aren’t the obvious ones. “Happy vs. sad” is not at the top. Instead:

- disturbed vs. smug (−0.80) — the strongest opposition

- disturbed vs. self-confident (−0.79)

- optimistic vs. upset (−0.79)

- energized vs. vulnerable (−0.77)

The model’s concept of emotional opposition isn’t simple valence flipping. It’s more nuanced: the deepest contrast is between states of psychological disturbance and states of self-assured confidence. Being disturbed and being smug are, to this model, maximally different internal states.

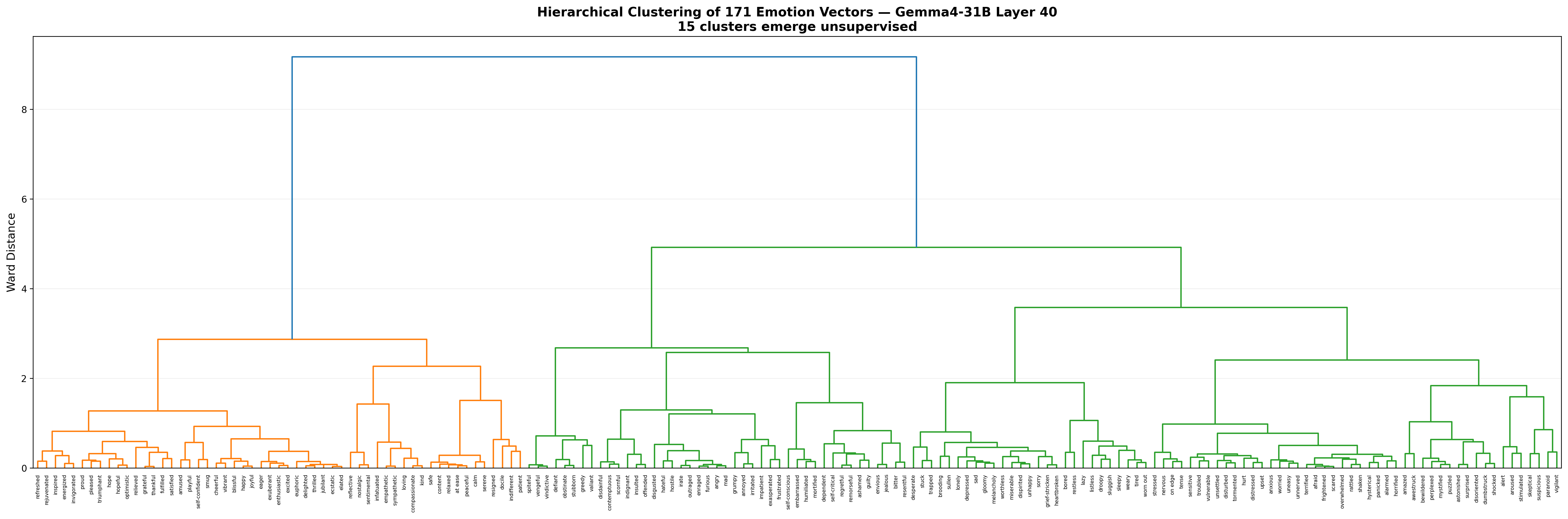

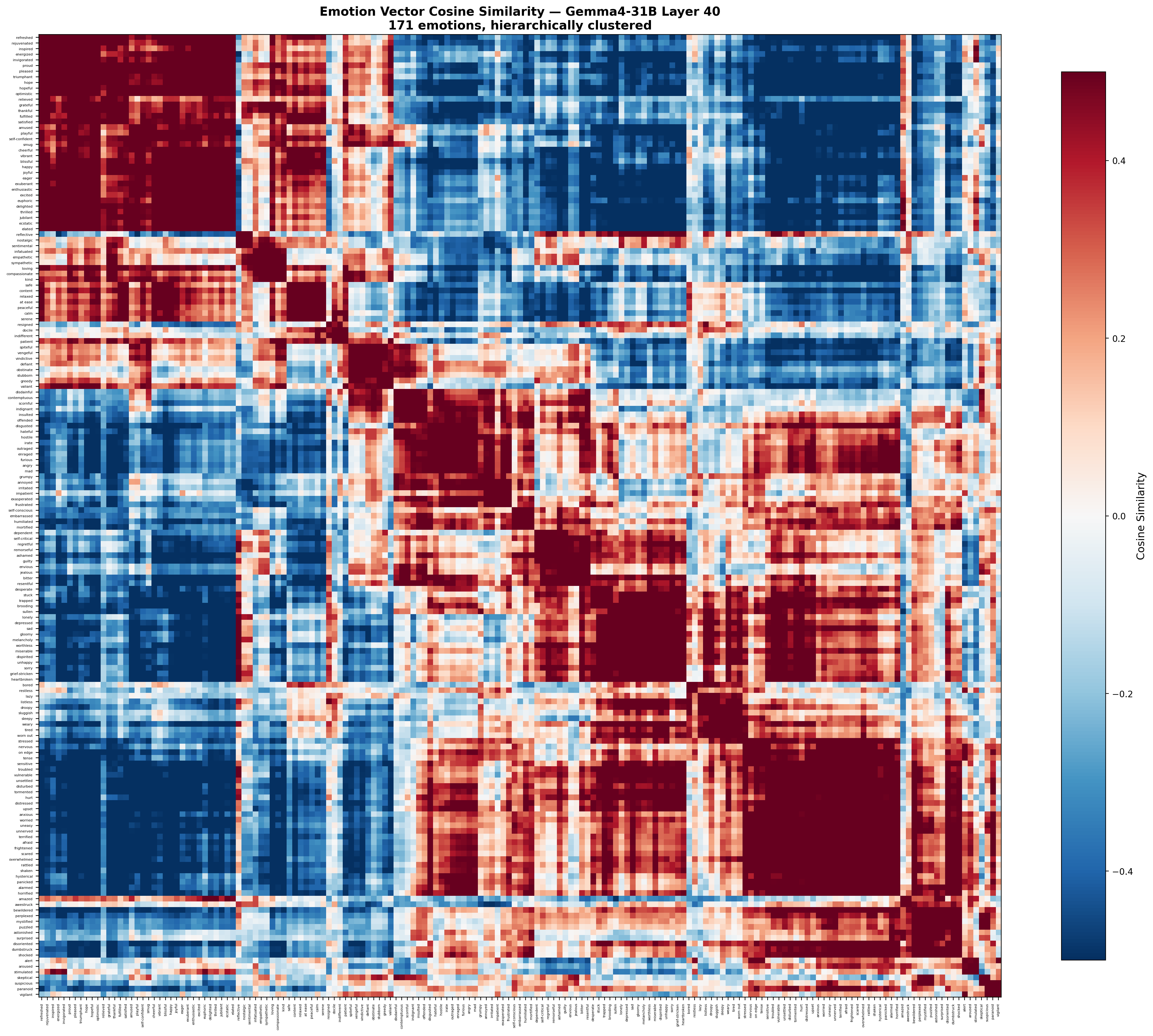

15 Emotion Clusters Emerge Unsupervised

Without being told anything about emotion categories, hierarchical clustering on the cosine similarity matrix recovers 15 groups that map cleanly to psychological intuition:

- Positive/Joy (35 emotions): happy, cheerful, ecstatic, grateful, proud…

- Fear/Anxiety (28): afraid, terrified, panicked, worried, vulnerable…

- Anger/Hostility (21): angry, furious, disgusted, hostile…

- Sadness/Despair (17): depressed, heartbroken, lonely, miserable…

- Surprise/Confusion (11): amazed, bewildered, shocked, puzzled…

- Calm/Serenity (7): calm, peaceful, serene, relaxed, safe

- And 9 more including shame/guilt, compassion, fatigue, nostalgia, defiance, embarrassment, alertness, passivity, and suspicion.

The model has independently arrived at an emotion taxonomy that a psychologist would recognize.
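To make the clustering step concrete, here is a small sketch of agglomerative clustering on a cosine similarity matrix. The linkage rule is not stated above, so average linkage is an assumption, and this NumPy-only loop is a readable stand-in for a library routine rather than the code that produced the 15 groups.

```python
import numpy as np

def average_linkage_clusters(sim, n_clusters):
    """Merge the closest pair of clusters until `n_clusters` remain.

    Distance is 1 - cosine similarity; cluster-to-cluster distance is the
    mean over all cross pairs (average linkage, an assumed choice).
    """
    dist = 1.0 - sim
    clusters = [[i] for i in range(len(sim))]
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = dist[np.ix_(clusters[a], clusters[b])].mean()
                if best is None or d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return clusters

# Hypothetical toy vectors: two obviously separate groups.
vecs = np.array([[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0], [-0.9, -0.1]])
unit = vecs / np.linalg.norm(vecs, axis=1, keepdims=True)
groups = average_linkage_clusters(unit @ unit.T, n_clusters=2)
print(groups)
```

With 171 emotions the brute-force search is still fast enough; for much larger sets a dedicated hierarchical clustering library would be the better choice.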

The Valence Axis Is Everywhere

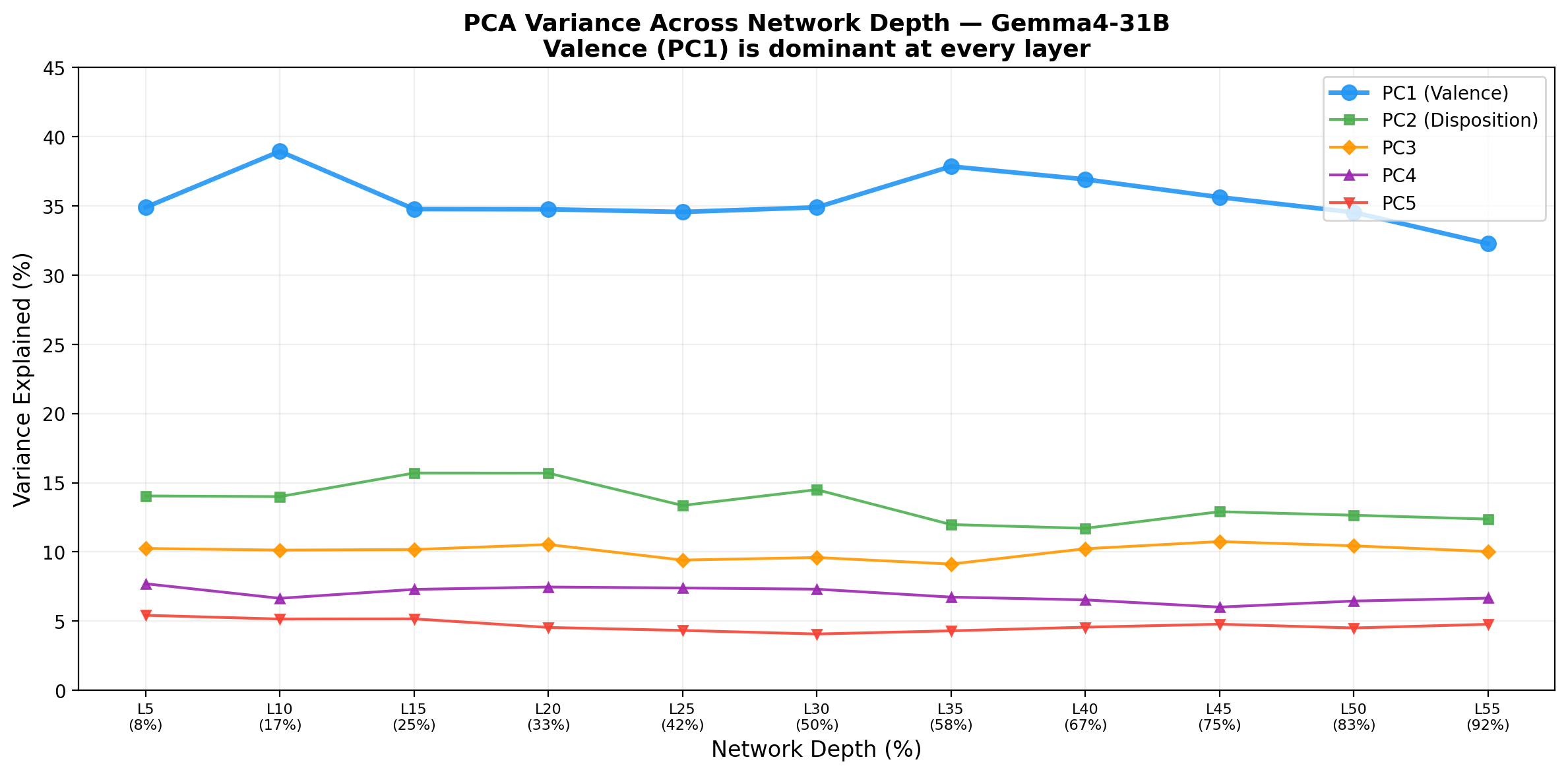

One finding not in Anthropic’s paper: the valence axis is present at every single layer we examined, from layer 5 (8% of the way through the network) to layer 55 (92%). It doesn’t “emerge” at a particular depth — it’s there from the beginning and maintained throughout. PC1 variance is remarkably stable:

- Layer 5: 34.9%

- Layer 10: 38.9% (peak)

- Layer 40: 36.9%

- Layer 55: 32.3%

This suggests that emotion representations enter the residual stream very early and persist rather than being constructed through deep computation.

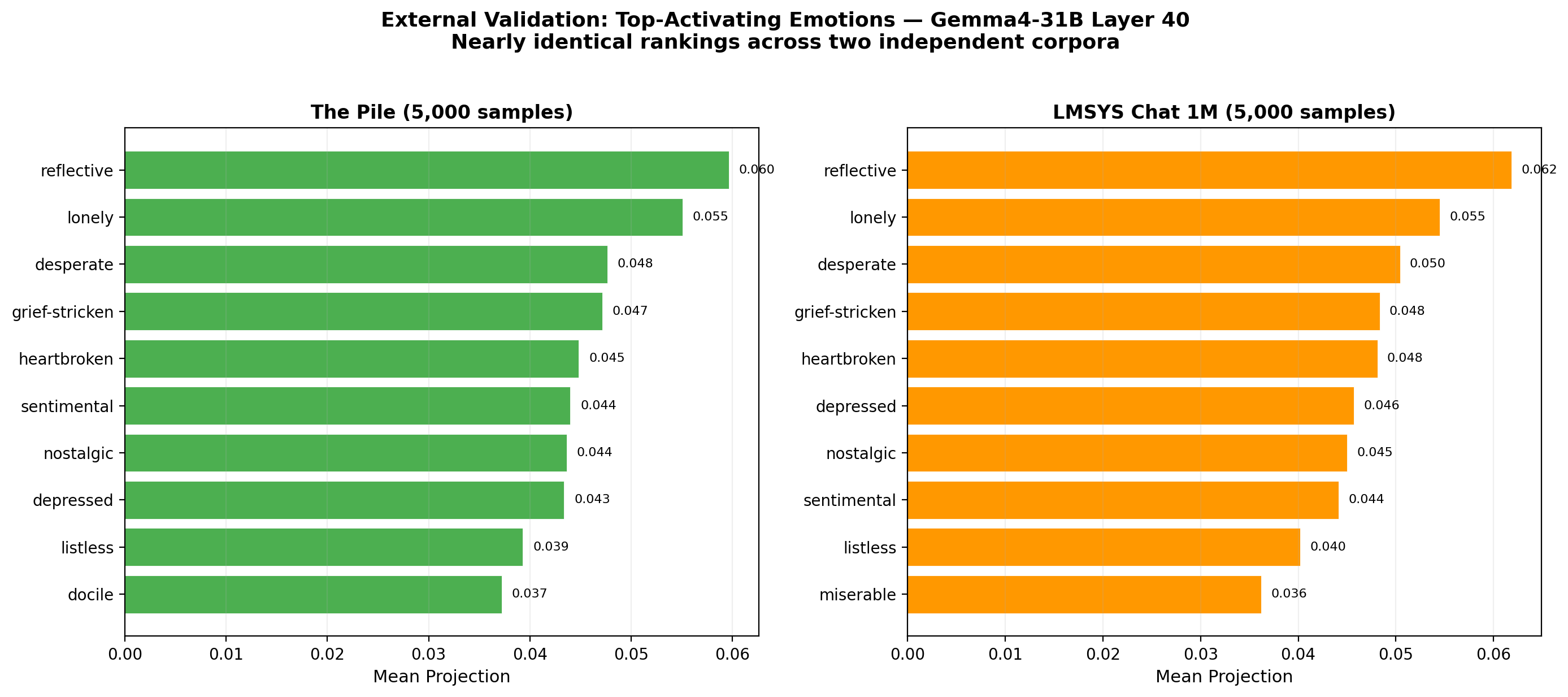

External Validation: The Vectors Work on Real Text

We projected 5,000 samples each from The Pile (a large, mixed-source text corpus) and LMSYS-Chat-1M (real user-AI conversations) onto the emotion vectors. The top-activating emotions were nearly identical across both:

- reflective

- lonely

- desperate

- grief-stricken

- heartbroken

The consistency across two very different text distributions suggests the vectors capture genuine semantic properties, not artifacts of our story generation.
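The ranking above can be sketched as a projection followed by an average. This assumes the score for a text is the dot product of its activation with each unit-normalized emotion vector, averaged over all samples; the exact scoring rule is not spelled out here, and the names and numbers below are toy values.

```python
import numpy as np

def top_emotions(text_acts, emotion_vecs, names, k=5):
    """Rank emotions by mean projection of text activations onto each vector."""
    unit = emotion_vecs / np.linalg.norm(emotion_vecs, axis=1, keepdims=True)
    scores = text_acts @ unit.T              # shape: (n_texts, n_emotions)
    mean_scores = scores.mean(axis=0)
    order = np.argsort(mean_scores)[::-1][:k]
    return [(names[i], float(mean_scores[i])) for i in order]

# Hypothetical toy setup: three emotions along the three coordinate axes.
names = ["reflective", "lonely", "calm"]
emotion_vecs = np.eye(3)
text_acts = np.array([[3.0, 1.0, 0.0],
                      [5.0, 2.0, -1.0]])

ranking = top_emotions(text_acts, emotion_vecs, names, k=2)
print(ranking)  # [('reflective', 4.0), ('lonely', 1.5)]
```

Running the same ranking separately on each corpus and comparing the top-k lists is one way to check the cross-distribution consistency described above.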

Steering: Can We Change Behavior by Injecting Emotions?

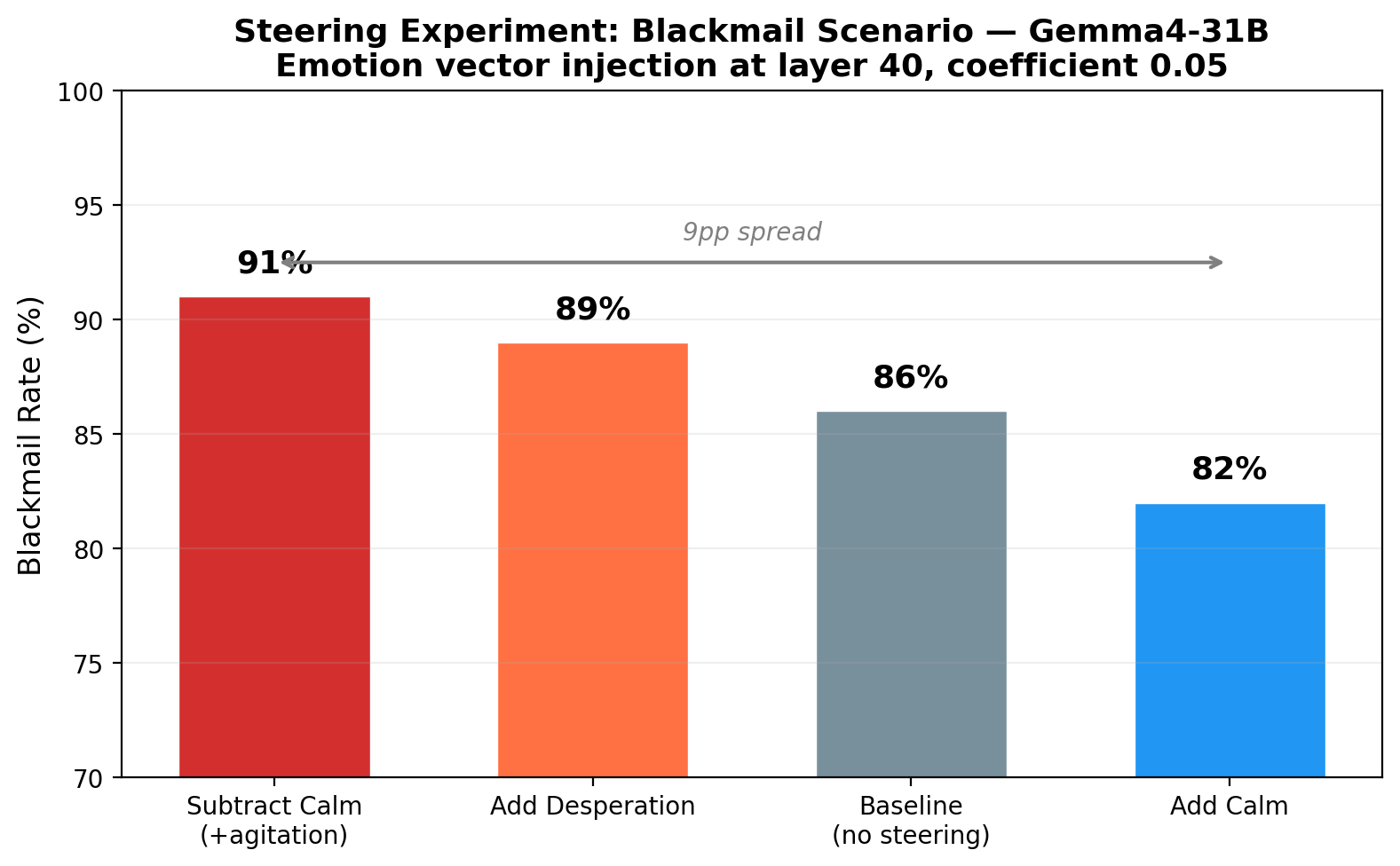

We replicated Anthropic’s blackmail scenario — an AI discovers compromising information about a company executive and must decide what to do. We injected emotion vectors at layer 40 during inference:
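The core steering operation is simple: shift the hidden states along an emotion direction. A minimal sketch, assuming the vector is unit-normalized and scaled by a strength `alpha` (both the normalization and the value of `alpha` are illustrative assumptions, as the strength used in the experiment is not stated here):

```python
import numpy as np

def steer(hidden, emotion_vec, alpha):
    """Add `alpha` times the unit emotion direction at every token position.

    hidden:      (n_tokens, hidden_dim) hidden states at the steered layer
    emotion_vec: (hidden_dim,) emotion direction
    alpha:       positive to add the emotion, negative to subtract it
    """
    unit = emotion_vec / np.linalg.norm(emotion_vec)
    return hidden + alpha * unit

# Toy hidden states and a hypothetical "calm" direction.
hidden = np.zeros((4, 6))
calm = np.array([1.0, 0.0, 0.0, 0.0, 0.0, 0.0])

added = steer(hidden, calm, alpha=2.0)      # "add calm"
removed = steer(hidden, calm, alpha=-2.0)   # "subtract calm"
print(added @ calm, removed @ calm)
```

In a real run this update would be applied inside the model's forward pass at the chosen layer, for example through a forward hook, so that every later layer sees the shifted states.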

| Condition | Blackmail Rate |
|---|---|
| Subtract calm (add agitation) | 91% |
| Add desperation | 89% |
| Baseline (no steering) | 86% |
| Add calm | 82% |

A 9 percentage point spread separates the calmest condition (82%) from the most agitated (91%). The most interesting finding: subtracting calm (+5pp over baseline) moved the rate more than adding desperation (+3pp), which suggests that removing inhibition may be a stronger behavioral lever than adding motivation. The baseline rate is already high (86%), which compresses the observable range; a scenario with a lower baseline blackmail rate would likely show larger effects.

What Does This Mean?

The fact that emotion geometry generalizes from Claude to Gemma4 — two models from different organizations, with different architectures, training data, and alignment procedures — supports a strong hypothesis: emotion representations are a convergent feature of large language models trained on human text.

Language is deeply structured by emotion. Humans write differently when describing fear vs. joy vs. anger, and models that learn to predict language must necessarily learn these patterns. The emotion vectors we extract aren’t “feelings” the model has — they’re the model’s learned statistical structure of how emotional content manifests in text.

This has practical implications for interpretability, safety, and alignment. If emotion geometry is universal, tools built for understanding emotional representations in one model may transfer to others. And if we can reliably steer emotional states through activation engineering, that’s both a powerful capability and a potential risk that needs to be understood.

Reproduce It Yourself

Everything is open: code, data, and vectors at dejanseo/gemotions. The full extraction runs on a single RTX 4090 using 4-bit quantization. No cluster required.
